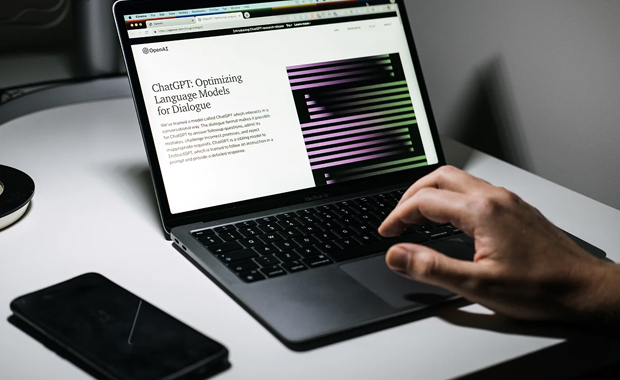

Generative AI has attracted a great deal of excitement and attention in a very short amount of time. The most prominent example is ChatGPT, the text-to-text generative AI tool built on the GPT-3.5 family of large language models and released in November 2022 by OpenAI. ChatGPT is optimized to answer questions, carry on a dialogue, and generate a wide range of responses, from text to code to investment pitches to Hollywood scripts to opinion pieces of the kind you'd read in major newspapers. If there’s one thing ChatGPT has most certainly generated, it’s buzz.

But amid the buzz, there are boosters, detractors, and realists. ChatGPT is one of many text-to-text generative AI tools, and some say it’s not even one of the best. For agencies deciding whether, and how, to use ChatGPT or another generative AI tool, a number of considerations can help guide your thinking.

First, the quality of a ChatGPT response is determined by two key factors: whether the model has been trained on related information, and how specific the prompt is. Domain-specific information, such as specialized terms or contextual meanings, requires additional model training and tuning. As for prompts, the more detailed the prompt, the better the response will be. Think of ChatGPT as a Google search that generates answers in full text, as opposed to links. And as with a search, not all ChatGPT responses are accurate. ChatGPT’s responses are well-crafted text that reads well, but they may be absolutely wrong.
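To make the point about prompt specificity concrete, here is a minimal sketch in Python that assembles a prompt from a few structured fields. The `PromptSpec` class, the `build_prompt` function, and the field names are hypothetical helpers invented for this illustration, not part of ChatGPT or any OpenAI library, and the context and constraint values are made-up examples. The sketch only builds the prompt text; it does not call any model.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PromptSpec:
    """Hypothetical container for the details that make a prompt specific."""
    question: str
    context: str = ""
    constraints: List[str] = field(default_factory=list)
    output_format: str = ""


def build_prompt(spec: PromptSpec) -> str:
    """Assemble a prompt string from whichever fields are filled in."""
    parts = []
    if spec.context:
        parts.append(f"Context: {spec.context}")
    parts.append(f"Question: {spec.question}")
    if spec.constraints:
        parts.append("Constraints:")
        parts.extend(f"- {item}" for item in spec.constraints)
    if spec.output_format:
        parts.append(f"Answer format: {spec.output_format}")
    return "\n".join(parts)


# A vague prompt: only the bare question.
vague = build_prompt(PromptSpec(question="How do I deploy a model?"))

# A detailed prompt: same intent, with illustrative (assumed) specifics added.
detailed = build_prompt(PromptSpec(
    question=("How do I containerize a predictive model "
              "for deployment to an edge device?"),
    context="The model is a Python classifier; the device has limited memory.",
    constraints=["Keep the container image small", "No network access at runtime"],
    output_format="Numbered steps followed by an example Dockerfile",
))

print(vague)
print("---")
print(detailed)
```

The second prompt gives the model the domain, the limits, and the shape of the answer you want, which is the kind of detail that tends to improve a response. A more detailed prompt still does not guarantee a correct one, so the output needs the same scrutiny either way.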

At Techvedic, we used ChatGPT to ask detailed technical questions and achieved very good results. Some of our prompts included: How do I containerize a predictive model for deployment to an edge device? How do I train a predictive model given a highly skewed distribution of target outcomes? How do I remove bias from training data when training a classifier? Again, we were impressed with the results but were on the lookout for errors and missed context.

Like any other tool, ChatGPT is limited to what it knows and has been trained on. And it has been trained to predict and generate content based on billions of example texts.

It does not truly understand inferences in language, and it cannot reliably do math or perform logic. In one example discussed on a recent edition of New York Times columnist Ezra Klein’s podcast, ChatGPT was asked about “the gender of the first female president.” Any human reading that question would come to the logical conclusion that the gender of the first female president will be … female. Instead, ChatGPT gave a response about assumptions, equality, and gender as a construct. The response was very inclusive, articulate, and well composed, but it was, at the end of the day, incorrect.

This is something that concerns many people about generative AI, at least in the early stage we are in today. ChatGPT can be persuasive regardless of whether it is correct. It is not difficult to imagine incorrect content being accepted as truth and used to create false advertising and misinformation.

Despite the limitations and concerns, there are clear opportunities where ChatGPT has the potential to positively transform how agencies get work done, just as automation did several years ago. Agencies looking to evaluate ChatGPT today, and perhaps other generative AI tools in the future, should test these tools across a variety of use cases.

As examples, agencies can ask ChatGPT detailed technical questions and gauge the results. They can use it to generate or debug code, or to detect security issues in code. ChatGPT can perform service desk or help desk chat basics, and it can generate scripts to containerize code, deploy a virtual machine, run scans, or perform other repetitive configuration tasks. It can also reverse engineer shellcode and much, much more.

To be sure, the AI in ChatGPT is good and ready to use today, but it can get better. And it will. That’s the wonderful thing about where we are today with generative AI. There are so many new tools emerging, and there will be many more to come. Before ChatGPT was all the rage with text, DALL-E got the same buzz for images.

No matter the tool, AI will always be an accelerator and a quality enhancer, but it will never be able to give us answers to every imaginable problem. There will always be a market for human ingenuity, and when it is combined with technology like AI and others, we really start to delve into the art of the possible.